Design of Scalable Storage Systems

Published in ACM SIGMETRICS'22, ASPLOS'23, IEEE CAL'24, IEEE CAL'26

This has been one of the themes of my research over the past six years, and it is still ongoing.

With data volumes growing exponentially every year and demand for faster storage rising, designing scalable storage systems is of utmost importance. In this project, we attack the scalability problem from several angles. Three core capabilities of a storage system are critical: increasing the number of storage devices, providing high-performance data reduction, and handling I/O caching at extreme rates. In this regard, we have completed multiple sub-projects and published the results in top venues including ACM SIGMETRICS’22, ASPLOS’23, IEEE CAL’24, and IEEE CAL’26. In the following paragraphs, I provide a brief summary of some of these published works.

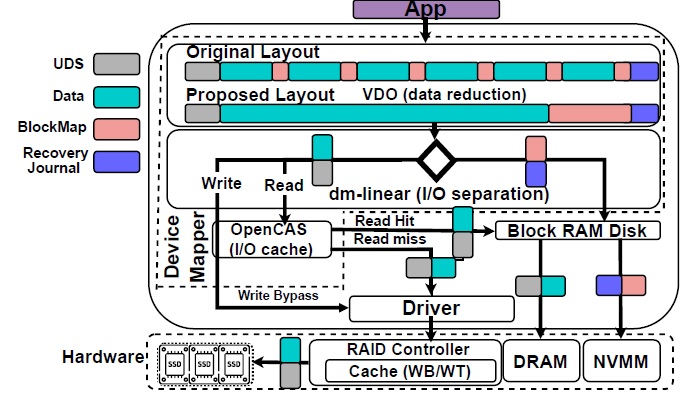

Our SIGMETRICS’22 WORK: All-flash storage (AFS) systems have become an essential infrastructure component for enterprise applications that require sub-millisecond latency and very high throughput. Nevertheless, the price per capacity of solid-state drives (SSDs) is relatively high, which has encouraged system architects to adopt data reduction techniques, mainly deduplication and compression, in enterprise storage solutions. To provide higher reliability and performance, SSDs are typically grouped using redundant array of independent disks (RAID) configurations. Data reduction on top of RAID arrays, however, adds I/O overhead and complicates the I/O patterns redirected to the underlying backend SSDs, which invalidates the best-practice configurations used in AFS. Unfortunately, existing works on the performance of data reduction do not consider how it interacts with, and what I/O overhead it imposes on, other enterprise storage components, including SSD arrays and RAID controllers.

In this paper, using a real setup with enterprise-grade components and based on the open-source data reduction module RedHat VDO, we reveal novel observations on the performance gap between the state-of-the-art and the optimal all-flash storage stack with integrated data reduction. To this end, we explore the I/O patterns at the storage entry point and compare them with those at the disk subsystem. Our analysis shows significant I/O overhead from guaranteeing consistency and avoiding data loss through data journaling, from frequent small-sized metadata updates, and from duplicate content verification. We accompany these observations with cross-layer optimizations to enhance the performance of AFS, which range from deriving new optimal hardware RAID configurations to introducing changes to the enterprise storage stack. By analyzing the characteristics of I/O types and their overheads, we propose three techniques: (a) application-aware lazy persistence, (b) a fast, read-only I/O cache for duplicate verification, and (c) disaggregation of block maps and data by offloading block maps to a very fast persistent memory device. By consolidating all proposed optimizations and implementing them in an enterprise AFS, we show 1.3× to 12.5× speedup over the baseline AFS with 90% data reduction, and from 7.8× up to 57× performance/cost improvement over an optimized AFS (with no data reduction) running applications ranging from 100% read-only to 100% write-only accesses.
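To make technique (b) more concrete, the following is a minimal sketch of a read-only cache used for duplicate verification. It is not the implementation from the paper or from VDO: the class `VerifyCache`, the helper `is_duplicate`, the LRU eviction policy, and the `read_block` callback are all hypothetical names and choices made for illustration. The idea shown is only that a byte-for-byte comparison against a candidate duplicate block can be served from memory instead of issuing a read to the backend SSDs.

```python
from collections import OrderedDict


class VerifyCache:
    """Hypothetical LRU cache of block contents, keyed by physical block number.

    The cache is read-only with respect to the data path: it only holds copies
    of blocks fetched for verification and never holds dirty data.
    """

    def __init__(self, capacity, read_block):
        self.capacity = capacity
        self.read_block = read_block  # assumed callable: block number -> bytes
        self.blocks = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, pbn):
        if pbn in self.blocks:
            self.blocks.move_to_end(pbn)  # mark as most recently used
            self.hits += 1
            return self.blocks[pbn]
        self.misses += 1
        data = self.read_block(pbn)  # falls through to the backend
        self.blocks[pbn] = data
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used block
        return data


def is_duplicate(cache, candidate_pbn, new_data):
    """Byte-compare incoming data with the stored block a hash index pointed to."""
    return cache.get(candidate_pbn) == new_data
```

In this sketch, repeated verification against the same popular block costs one backend read followed by in-memory hits. A real system would also need to invalidate cached entries when a physical block is freed or rewritten, which is omitted here.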

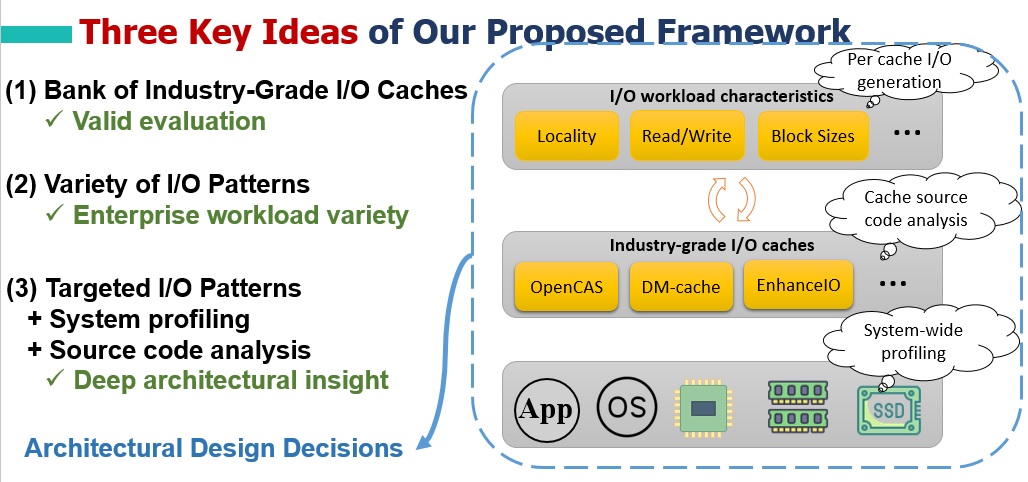

Our ASPLOS’23 WORK: I/O caching has been widely used in enterprise storage systems to enhance system performance at minimal cost. Using Solid-State Drives (SSDs) as an I/O caching layer on top of arrays of Hard Disk Drives (HDDs) has been examined in numerous studies. With the emergence of ultra-fast storage devices, recent studies suggest using them as an I/O cache layer on top of mainstream SSDs in I/O-intensive applications. Our detailed analysis shows that, despite the significant potential of ultra-fast storage devices, existing I/O cache architectures may act as a major performance bottleneck in enterprise storage systems, which prevents taking advantage of the devices' full performance potential.

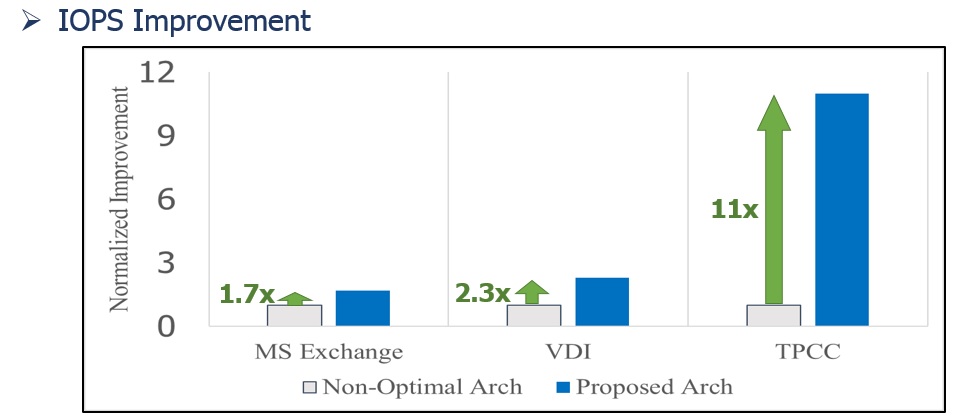

In this paper, using an enterprise-grade all-flash storage system, we first present a thorough analysis of the performance of I/O cache modules when ultra-fast memories are used as a caching layer on top of mainstream SSDs. Unlike traditional SSD-based caching on HDD arrays, we show that using ultra-fast memory as an I/O cache device on SSD arrays exhibits completely unexpected performance behavior. As an example, we show that two popular cache architectures exhibit similar throughput on traditional SSD/HDD setups because the devices are the performance bottleneck, but with ultra-fast memory on SSD arrays their true potential is released and they show a 5× performance difference. We then propose an experimental evaluation framework to systematically examine the behavior of I/O cache modules on emerging ultra-fast devices. Our framework enables system architects to examine performance-critical design choices including multi-threading, locking granularity, promotion logic, cache line size, and flushing policy. We further offer several optimizations using the proposed framework, integrate the proposed optimizations, and evaluate them on real use cases. The experiments on an industry-grade storage system show that our I/O cache architecture, optimally configured by the proposed framework, provides up to 11× higher throughput and up to 30× improved tail latency over a non-optimal architecture.

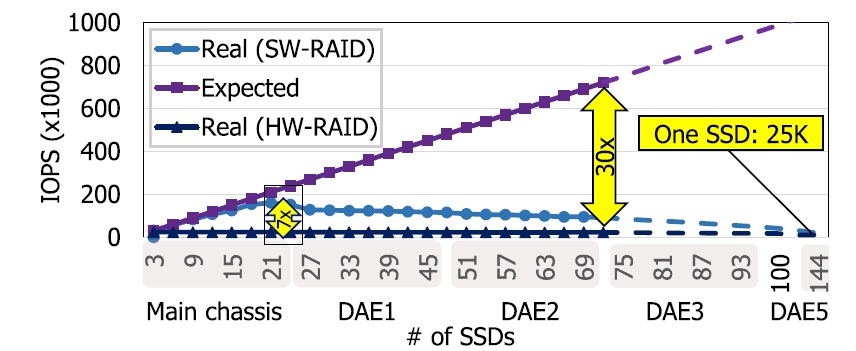

Our IEEE CAL’24 WORK: In this paper, we first analyze a real storage system consisting of 72 SSDs utilizing either Hardware RAID (HW-RAID) or Software RAID (SW-RAID), and show that SW-RAID is up to 7× faster. We then reveal that, as the number of SSDs increases, the limited I/O parallelism in SAS controllers and multi-enclosure handshaking overheads cause a significant performance drop, reducing the total I/O operations per second (IOPS) of a 144-SSD system to less than that of a single SSD. Second, we disclose the most important architectural parameters that affect a large-scale storage system. Third, we propose a framework that models a large-scale storage system and estimates the system IOPS and system resource usage for various architectures. We verify our framework against a real system and show its high accuracy. Lastly, we analyze a use case of a 240-SSD system and reveal how our framework guides architects in storage system scaling.
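As a rough illustration of why adding SSDs does not automatically add IOPS, the sketch below uses a simple bottleneck (minimum-of-stages) estimate. This is not the framework from the paper: the function `estimate_iops`, its parameters, and the three stages it considers are assumptions made for illustration, and it deliberately ignores effects such as multi-enclosure handshaking that the paper identifies.

```python
def estimate_iops(num_ssds, ssd_iops, num_controllers, controller_iops, cpu_iops):
    """Return (estimated system IOPS, name of the limiting stage).

    Hypothetical model: the system can go no faster than its slowest stage,
    where each stage's capacity is the sum of its parallel units.
    """
    stages = {
        "ssds": num_ssds * ssd_iops,
        "controllers": num_controllers * controller_iops,
        "cpu": cpu_iops,
    }
    bottleneck = min(stages, key=stages.get)
    return stages[bottleneck], bottleneck


# Example with made-up numbers: many SSDs behind only two controllers.
iops, stage = estimate_iops(
    num_ssds=72, ssd_iops=100_000,
    num_controllers=2, controller_iops=1_000_000,
    cpu_iops=5_000_000,
)
print(iops, stage)
```

With these made-up inputs the controllers, not the SSDs, bound the estimate, so adding more drives would not raise it. A model of this kind cannot reproduce a drop below single-SSD performance; capturing that would require modeling the overheads described above.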

Our IEEE CAL’26 WORK: Storage systems in mission-critical environments require steady high performance at all times. When a Solid-State Drive (SSD) fails within a disk array, array reconstruction starts and application I/O requests are therefore served more slowly. As our first contribution, we reveal that SSD arrays suffer up to a 3.3× drop in I/O operations per second (IOPS) when serving enterprise workloads during array reconstruction. To mitigate the array reconstruction problem, some existing studies modify RAID algorithms, which makes them inapplicable to many deployed SAN storage systems. Others propose predicting hot workload accesses during array reconstruction and serving those addresses from a faster device. Unfortunately, we observe that such approaches mainly focus on short history or assign improper weights to long history tracking, and thus suffer from incomplete access predictions and low acceleration opportunities. As our second contribution, we propose a novel I/O history pattern analysis that applies proper weights to short- and long-term history to maximize detection of repeated workload accesses during array reconstruction. We implement our proposed method and evaluate its effectiveness on a real system using enterprise workload I/O traces. Our proposed method predicts 80% more hot requests than the state of the art, which enables 27% higher average IOPS during array reconstruction.
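The general idea of weighting short- and long-term history can be sketched as follows. This is only an illustrative toy, not the method from the paper: the class `HotBlockPredictor`, the sliding-window size, the linear scoring formula, and the weights `w_short` and `w_long` are all assumptions. It scores each block by a weighted sum of its access frequency in a recent window and its frequency over the whole trace, then ranks blocks by that score.

```python
from collections import Counter, deque


class HotBlockPredictor:
    """Hypothetical hot-block ranking from weighted short- and long-term history."""

    def __init__(self, short_window=4, w_short=0.6, w_long=0.4):
        self.short = deque(maxlen=short_window)  # most recent accesses
        self.short_counts = Counter()            # counts within the window
        self.long_counts = Counter()             # counts over the whole trace
        self.w_short = w_short
        self.w_long = w_long
        self.total = 0

    def record(self, block):
        # Drop the oldest access from the window counts before it is evicted.
        if len(self.short) == self.short.maxlen:
            old = self.short[0]
            self.short_counts[old] -= 1
            if self.short_counts[old] == 0:
                del self.short_counts[old]
        self.short.append(block)
        self.short_counts[block] += 1
        self.long_counts[block] += 1
        self.total += 1

    def score(self, block):
        if self.total == 0:
            return 0.0
        short_freq = self.short_counts[block] / len(self.short)
        long_freq = self.long_counts[block] / self.total
        return self.w_short * short_freq + self.w_long * long_freq

    def hottest(self, k):
        return sorted(self.long_counts, key=self.score, reverse=True)[:k]


predictor = HotBlockPredictor()
for block in [1, 1, 1, 1, 2, 3, 2, 3]:
    predictor.record(block)
print(predictor.hottest(2))
```

In this toy trace, block 1 dominates the long-term counts but has left the recent window, so the default weights rank blocks 2 and 3 above it. Setting `w_short=0` would rank block 1 first, which shows how the choice of weights changes which accesses are predicted as hot.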