ML Infrastructure and ML Workloads

Published in IEEE CAL'25

This is another theme of my research over the past few years, and the work is ongoing.

Machine Learning (ML) and Artificial Intelligence (AI) are common terms these days, and their importance is widely recognized. One of the critical aspects of delivering better ML services is designing and configuring ML infrastructure more effectively, as well as understanding ML workload behavior more deeply. We have started this project, have published a paper in IEEE CAL’25, and have more work to come.

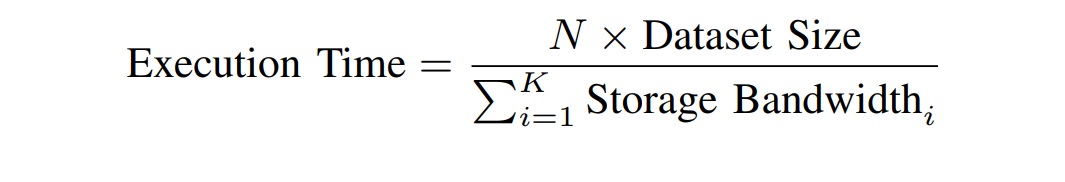

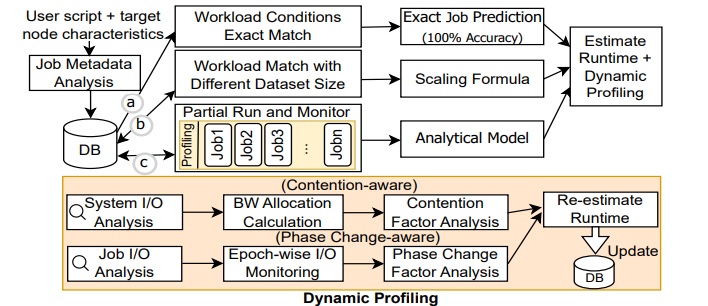

Overview of our work in IEEE CAL’25: Training Machine Learning (ML) models commonly relies on High-Performance Computing (HPC) centers or cloud servers that host compute nodes with powerful resources. Users often improve the accuracy of ML applications through continuous training as models are refined and datasets grow. Given such application changes and the heterogeneity of HPC nodes, knowing each job's execution time in advance (i.e., predicting it) is necessary for efficient job scheduling. We observe that I/O accesses strongly influence the execution time of modern ML applications. Unfortunately, existing studies on estimating job execution time either (a) rely on overestimated, user-declared times, or (b) predict execution time mainly from compute resources (ignoring I/O and storage effects) and use complex deep learning models for this purpose. In this paper, we propose a simple yet effective method for predicting the execution time of ML training that explicitly accounts for I/O accesses as a critical factor. Our method combines (a) partial application execution and monitoring, (b) analytical modeling that leverages ML application characteristics, (c) dynamic re-estimation, and (d) simplified history-based analysis. Our evaluation on a number of Convolutional Neural Networks (CNNs) and Transformer models shows that the proposed method predicts execution time accurately, with an error below 8% relative to actual execution in most cases.
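To make the idea of partial execution, monitoring, and dynamic re-estimation more concrete, here is a minimal sketch in Python. It is not the model from the paper. It assumes that compute and I/O time are measured per epoch during a partial run, that the first epoch may pay extra I/O cost (for example, cold reads from storage) that later epochs avoid, and that every remaining epoch costs the steady-state average observed so far. The function names `predict_total_seconds` and `relative_error` and the timing values are hypothetical.

```python
from statistics import mean


def predict_total_seconds(epoch_times, total_epochs):
    """Predict total training time from the epochs monitored so far.

    epoch_times: list of (compute_s, io_s) tuples, one per completed epoch.
    Assumption: remaining epochs cost the steady-state mean of compute + I/O.
    """
    if not epoch_times:
        raise ValueError("monitor at least one epoch before predicting")
    done = len(epoch_times)
    if done > total_epochs:
        raise ValueError("more epochs observed than planned")
    # Time already spent in the partial run.
    elapsed = sum(c + io for c, io in epoch_times)
    # Skip the first epoch when later ones exist, since it may include
    # cold-storage I/O that later epochs do not pay (assumption).
    steady = epoch_times[1:] or epoch_times
    per_epoch = mean(c + io for c, io in steady)
    return elapsed + (total_epochs - done) * per_epoch


def relative_error(predicted, actual):
    """Absolute prediction error as a fraction of the actual time."""
    return abs(predicted - actual) / actual


# Dynamic re-estimation: refresh the prediction after each monitored epoch.
observed = [(50.0, 30.0), (50.0, 10.0), (50.0, 10.0)]  # hypothetical (compute_s, io_s)
for k in range(1, len(observed) + 1):
    print(k, predict_total_seconds(observed[:k], total_epochs=10))
```

With these hypothetical timings the loop prints `1 800.0`, then `2 620.0`, then `3 620.0`: the first estimate is inflated by the I/O-heavy first epoch, and re-estimating once a steady-state epoch is observed corrects it. Tracking I/O time separately from compute time is what makes this kind of correction visible.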