CPU Performance Modeling and Simulator Validation

DiagSim (Publication: ACM TACO'18)

This was one of the projects I contributed to when I was a PhD student at POSTECH.

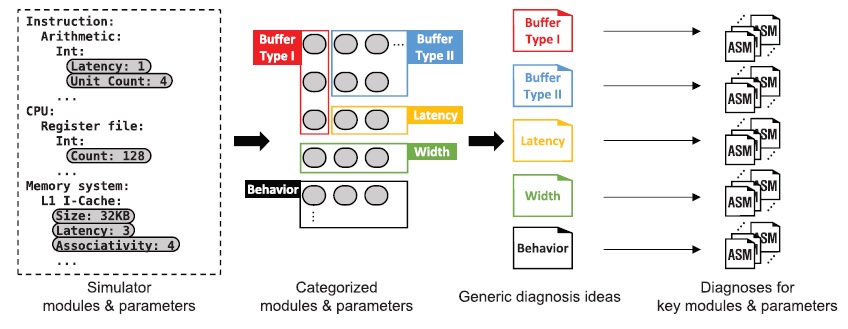

Brief Description: Modern CPU simulators are highly complex; some exceed 200K lines of code. As a result, when implementing and evaluating a new microarchitecture in such a simulator, users typically inspect and modify only the modules they are interested in. However, hidden simulator details and interactions between modules can affect the simulation results and lead users to incorrect conclusions. To detect such unexpected behaviors, we propose a framework that enables efficient and systematic diagnosis of simulators. The framework consists of a set of microbenchmarks, each paired with a generic model of its expected behavior, that run on a target simulator to check the behavior of individual modules and quickly pinpoint the modules that show discrepancies. We applied the framework to three popular open-source simulators and discovered hidden details that could significantly affect the reported performance. This research resulted in a publication in ACM TACO'18.
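To make the "microbenchmark plus generic model" idea more concrete, here is a minimal sketch of the comparison step. It is not DiagSim's actual implementation. The class and function names, the 10% tolerance, the 4-cycle L1 hit latency, and the 4-wide issue assumption are all hypothetical and chosen purely for illustration. Each microbenchmark targets one simulator module and carries a cycle count predicted by a simple analytical model. Modules whose simulated cycle counts deviate from that prediction beyond the tolerance are flagged as suspects.

```python
from dataclasses import dataclass


@dataclass
class Microbenchmark:
    name: str               # hypothetical microbenchmark identifier
    module: str             # simulator module this kernel is meant to stress
    expected_cycles: float  # prediction from a simple (generic) model


def expected_pointer_chase_cycles(loads: int, l1_latency: int) -> float:
    """Generic model (assumption): a chain of dependent L1 hits fully
    serializes, so total cycles are roughly loads * hit latency."""
    return float(loads * l1_latency)


def flag_discrepancies(benchmarks, measured, tolerance=0.10):
    """Group benchmarks by module and return those whose simulated cycle
    count deviates from the model by more than `tolerance` (relative error)."""
    suspects = {}
    for bench in benchmarks:
        observed = measured[bench.name]
        error = abs(observed - bench.expected_cycles) / bench.expected_cycles
        if error > tolerance:
            suspects.setdefault(bench.module, []).append((bench.name, round(error, 3)))
    return suspects


if __name__ == "__main__":
    # Hypothetical inputs only; these are NOT results from the paper.
    benchmarks = [
        # 1000 dependent loads at an assumed 4-cycle L1 hit latency.
        Microbenchmark("l1_pointer_chase", "L1D cache",
                       expected_pointer_chase_cycles(1000, 4)),
        # 1000 independent adds on an assumed 4-wide integer pipeline.
        Microbenchmark("independent_adds", "integer ALU", 1000 / 4),
    ]
    simulated = {"l1_pointer_chase": 5000.0, "independent_adds": 255.0}
    print(flag_discrepancies(benchmarks, simulated))
```

With these made-up numbers, only the L1D cache benchmark (25% off its model) is reported, while the ALU benchmark (2% off) passes. Keeping each model deliberately simple is what lets a mismatch point at one module rather than at the whole pipeline.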